All Writers Will End Up AI-Maxxing, and This Is Good

Why you should stop fighting the inevitable

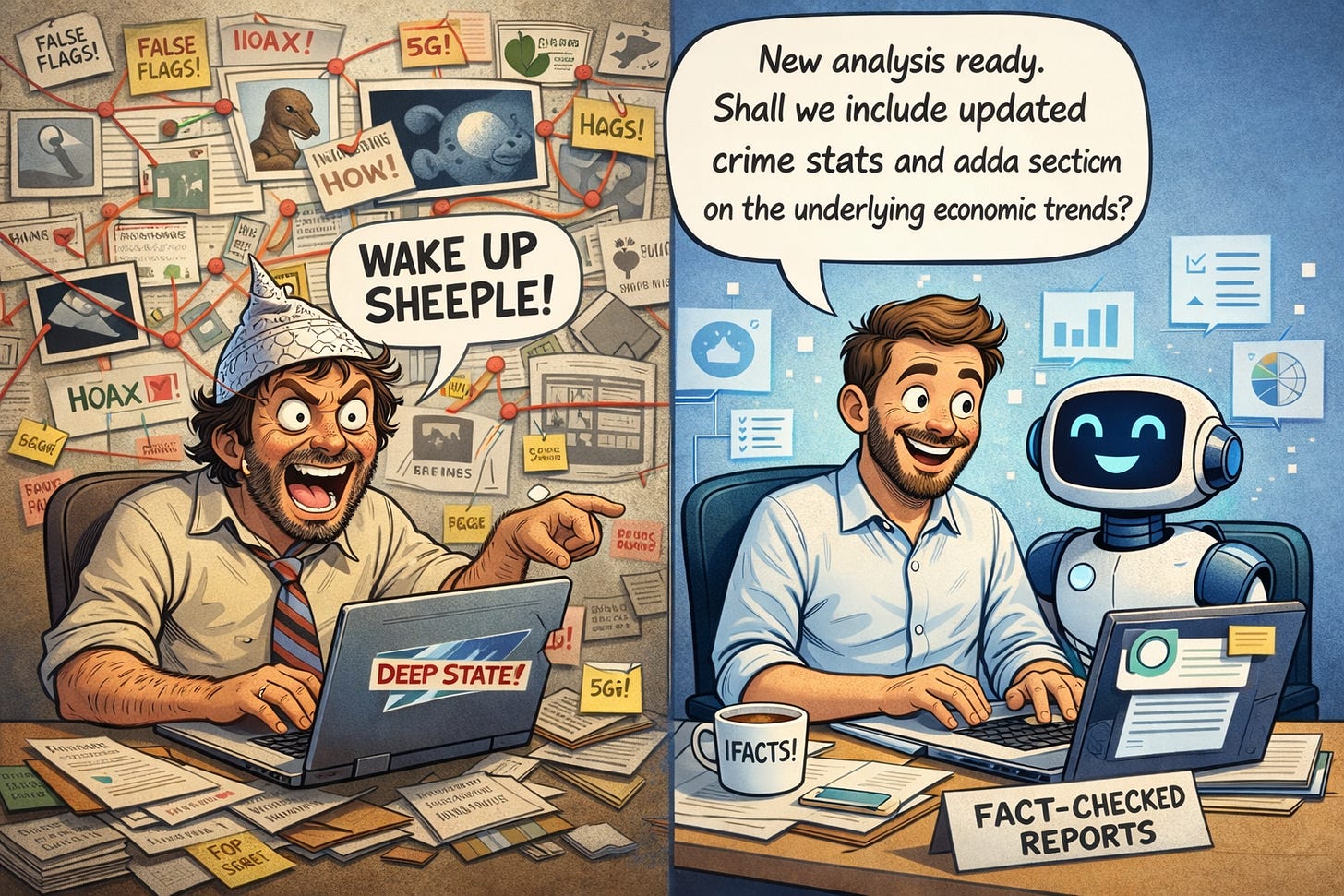

I just published an article in UnHerd about why AI makes me optimistic about the possibility of beginning to counteract some of the negative political impacts of social media. The idea is that as more and more people inclined to conspiracy theories and populism are outsourcing their thinking to LLMs – which evidence suggests is happening – they will get better information and logical reasoning than they could expect from major influencers or trying to “do their own research.” But I’m also optimistic about the smartest writers, journalists, and academics producing much improved work based on this technology. The common moral intuition that writers who use AI are somehow behaving unethically mostly doesn’t make sense to me.

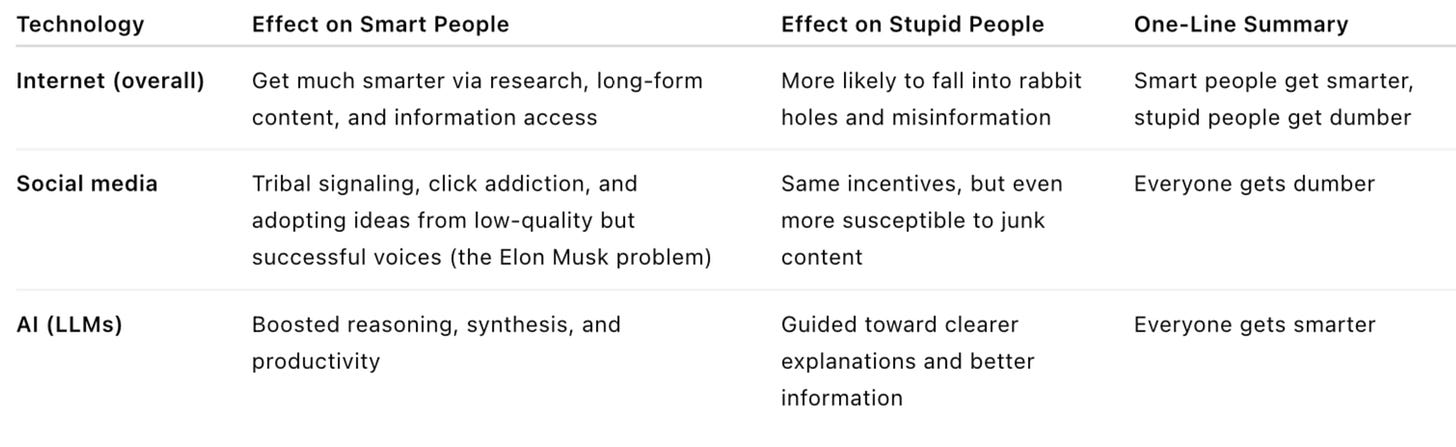

I can put it this way: For people who tend to think illogically and get their facts wrong, AI is better than what they have traditionally used to develop their worldviews. For those who are smart, based in reality, and diligent about getting things correct, LLMs are also a plus. We should therefore expect AI to make society smarter nearly across the board. This is unlike the internet, which I have argued has been good for some people and bad for others. The table below reflects my views.

Here I want to focus on the right side of the bell curve. More specifically, what kind of relationships should writers have with AI?

Note that I’m using “writer” here very broadly, to refer to not only essayists but also journalists and academics.

Imagine some time next year we all start to notice a brilliant young thinker who has a lot of interesting things to say. He seems to be on top of trends, presents new ways of looking at current events, and has a perspective that helps accurately predict what happens in the world. The writing is clear, neither dumbing down the ideas nor presenting them in ways that are inaccessible. When you fact check this person’s claims, they are almost always correct, and when it is called for he owns up to a mistake and issues a correction.

It is then revealed that this person’s writing was all done by AI. How should we react to this news? From my perspective, it shouldn’t really matter, and we should hope that this person continues to do the same kind of work. If his articles are thought-provoking and factual, the fact that they were written by AI takes nothing away from these qualities. The only argument I can see against him is that he was not fully honest with his audience, but this raises the question of why we should care in the first place. Let’s say this writer apologizes for the deception and says he’s going to fully disclose his use of AI from now on, but that his process will remain the same. I think at that point he would still be worth reading.

Maybe the problem is that using AI is not fair to other writers? Banning it would be like prohibiting steroids in athletic competition. But LLMs are available to everyone, and they don’t cause long-term health damage, so this is not the same thing. Some leftists seem to think that we should ban or refuse to use AI because it will take jobs, but this is just classic lump of labor fallacy, and instead of going over why this is the wrong way to view the world, I’m just going to send you here, here, and here.

Another potential issue people bring up is that AI may reduce our ability to think. This seems silly to me. One could’ve said the same about the internet. “Oh, you can just Google something? Doesn’t that rot your brain, when before you would have had to learn the Dewey Decimal System, get a stack of books, and look through them until you found the exact information you want?” Sure, the internet has made a lot of people dumber, but it has also made the highest-quality work smarter. I think we should be more optimistic about AI raising the collective intelligence of society as a whole, which would be the opposite of the impact of social media. If you care about truth and are an intelligent person, there’s no way that immediate factchecking and more access to information won’t improve your work.

Maybe the specific skill of writing itself will atrophy. But skills often atrophy when they’re no longer necessary, and that’s fine. People’s penmanship almost certainly got worse after the invention of word processors. Maybe in a decade, intellectuals will basically just be polishing AI content, or editing it to fit their views. Whatever thinking skills writing develops will probably still be exercised by the need to determine what is true or false in the output of AI, to package ideas, and to market them.

Some people are bad or slow writers, but there’s no reason that should exclude them from public discourse. I can’t relate to this particular problem. I once had an Ivy League professor tell me he doesn’t know if it’s worth producing op-eds, because each time he has to take a day to do it. I was shocked, as I can write an op-ed on any topic I’m knowledgeable about in an hour, with maybe two hours to get from a blank page to the finished product. I’m not saying this as a theoretical skill; it’s something that I’ve done regularly over the years for some of the most prominent newspapers in the country. There’s no temptation to just put my notes or outline into an LLM and ask for an article, since I don’t really produce notes or outlines except for a few phrases or sentence fragments that I put down to jog my memory, and it would take about as much time to explain to the LLM what kind of article I wanted as it would to just write it myself. It’s hard to imagine this changing even as AI gets better, since writing is simply not my bottleneck for producing essays.

Yet this is a gift, and there may be people out there with ideas just as good who simply don’t have this one particular skill. For them, AI for writing is no different from glasses for those who can’t see well: a technological fix to a natural shortcoming. We shouldn’t shame them for this. We don’t care whether an author used reading glasses when he was researching a book. Similarly, if I knew a writer used AI to help them compose their work, all I would care about is whether it was high quality.

In addition to making writing more accessible for those who have something important to say, AI-maxxing can create new forms of journalism and academic work that there wouldn’t otherwise be a market for. Yglesias recently wrote about how AI can produce pretty decent local news coverage during a time when doing it the old-fashioned way makes less economic sense. Sure, you need enough human control to feel safe that the work is accurate, but that is much less expensive than hiring a team of reporters. When it comes to local journalism, we are going to have to choose between AI reporters and not having much news coverage at all. You can imagine something similar with academic work. Archives are increasingly being digitized, and there may not be enough human scholars with the time and expertise to extract what is important from the material. Again, quality control is necessary, but I would have no problem reading an AI book on ancient history or political science if it went through proper factchecking protocols.

I was thinking about this when listening to a Blocked and Reported podcast on recent AI scandals. People have very strong opinions on whether and under what circumstances writers and journalists should use this technology. But the entire discussion strikes me as pointless. Imagine if there had been a debate in 1999 over whether authors should use the internet. AI is so beneficial to writers and content creators that people will have no choice but to use it. This is true whether you are producing high quality journalism or academic work, or slop that you want to go viral on X. It’s going to be impossible to resist the temptation to put notes into an LLM and ask for a rough draft, or to ask AI to occasionally structure a sentence better.

When I was in law school, I would edit law review articles by professors, and occasionally I’d see a citation to a newspaper story. This was in an earlier era of the internet, and they would put the page number in there to make it look like they were citing the physical copy. But I could tell they didn’t get it from there because there were sometimes differences in the headline between the paper version and the article posted on the internet. Today, there’s less reason for anyone to pretend that they tracked down a paper copy for a citation. Once newspapers went online, it was unrealistic to expect people to go to a newsstand or library to get the same article they can just make pop up on their computer. Likewise, no one is going to forgo the convenience and productivity-enhancing aspects of AI when it comes to writing, editing, proofreading, and factchecking.

Current AI scandals remind me of discussions over botched plastic surgery. Once in a while, you’ll see someone whose face has been disfigured, and people will use it to advise against getting any work done. But we don’t notice all the people who have gotten facelifts or Botox injections and achieved a natural and more appealing look. I’ve noticed that in recent years, women are staying attractive for much longer, and this is obviously something that should be celebrated. With AI, we similarly hear about instances when things go poorly, while ignoring cases where people are using the tool correctly.

One of the scandals covered by Katie and Jesse is that of the UK populist writer Matt Goodwin. The author’s latest book included fake quotes, which people naturally presume he got from AI. The problem here though isn’t AI, but rather that he is apparently a careless researcher who didn’t know enough about the tool he was using to realize that you need to check its references. I use AI for factchecking and research all the time, but to my knowledge, I’ve never included a fabricated quote in an article, because I always try to track down my sources. Likewise, when it comes to producing legal documents, we hear about lawyers who get caught citing cases that don’t exist, but surely there are many more people in the legal profession using LLMs whose finished work is beyond reproach.

One can imagine true cases of fraud. Let’s say I write a memoir, but I tell ChatGPT to just make stuff up based on some notes and information about me that is available on the internet. This is dishonest and wrong, since people will buy the book because they’re interested in my experiences and how I perceive them. In such cases, using AI should continue to be banned or stigmatized. Perhaps people have similar expectations when it comes to fiction, wanting the author to take them on a personal journey. But what if someone is writing about housing policy or the national debt? In that case, what matters is whether what is being published is factual and well-argued, not how humans and robots divided the work.

Since I’m calling for AI-maxxing, I’m going to close this article by taking my own advice. I’m done making my points, so instead of writing a closing paragraph myself, I’ve outsourced the job to AI. Putting an earlier version of this article into ChatGPT, I asked it to come up with a closing paragraph of 300 words or fewer that sums up the arguments in the style of the author. Here is what we get:

In the end, the case against AI writing collapses once we focus on what actually matters: truth, insight, and usefulness. If a piece of journalism, scholarship, or commentary is accurate, well-reasoned, and illuminating, the division of labor between human and machine is largely irrelevant. Technologies have always displaced specific skills while expanding the range of people able to contribute—word processors weakened penmanship, search engines replaced library stacks, and online archives transformed research—but each ultimately raised the ceiling of intellectual production. AI promises the same, lowering the barriers to entry for those with ideas but limited writing ability, enabling new forms of local journalism and large-scale academic synthesis, and accelerating fact-checking, editing, and discovery. The real risks are not unique to AI but familiar ones: carelessness, dishonesty, and poor verification. When fabricated quotes or nonexistent cases appear, the problem is sloppy authorship, not the tool itself. We already judge work by its quality rather than whether someone used reading glasses, spell-check, or online databases; AI should be treated no differently. There may remain narrow domains—memoirs, personal narratives, or works marketed as direct expressions of lived experience—where undisclosed AI use would mislead readers. But for analytical writing about policy, history, or public affairs, what ultimately matters is whether the final product is correct and valuable. As with the internet before it, AI will become unavoidable because it makes writers more productive and readers better informed. The sensible response is not to police its use, but to demand higher standards of accuracy and argument, regardless of how the words were produced.

Couldn’t have said it better myself.

Thanks for reading. One thing I’ve learned is that when you have a book coming out, you can never assume that even regular readers are aware of it.

For that reason, over the next few months I’m not going to miss any opportunity to inform my audience that I have a new book called Kakistocracy: Why Populism Ends in Disaster coming out in July – details here. If you enjoy articles like this, appreciate me as a truly independent writer, and would like to support my work, the best way to do so is to preorder the book, which you can do at the links here to Amazon or Barnes & Noble. All preorders count toward opening day sales, and will help determine how much attention it receives.

I will be reading the audiobook, in case that makes it more appealing.


Richard you're getting increasingly long-winded, repetitive, and redundant in the points you are making. You also repeat yourself and say the same thing twice and then it's repetitive and redundant.

Another thing you do is repeat the same argument essentially in a subsequent paragraph. But the topic of that paragraph is the same as the first one.

I don't think it's a coincidence this is happening more with your increased use of AI to coauthor stuff. Actually reading the whole piece becomes a slog. The AI is making you boring.

Imagine how cool it will be when AI can not only write well but come up with ideas all by itself. Writers won't have to do anything!